I often receive a standard response when I write about cooking myths or food myths here on Culinary Lore: “A food blogger performed an experiment on this, which proves you’re wrong” (or correct, as the case may be). I don’t often include a comment field in my articles, but some readers are passionate enough about the subject to take the time to email me their thoughts. Responding, however, presents a problem.

The Practicality Gap: Before we dive into the science, we have to ask: Is the effort worth the squeeze? Modern “food science” influencers often turn a 15-minute meal into a 3-hour ordeal for a 5% improvement in texture. If a “scientific” hack makes your life harder without a result you can actually taste in a blind test, it’s not science—it’s a hobby.

The Expert’s Dilemma: When Readers ‘Debunk’ with Anecdotes

First, the readers telling me about food experiments conducted by food bloggers are assuming I’m not aware of the “experiment.” They are usually aware of these experiments because they read about them in articles. If they found such sources, you can be sure I did as well. After all, it’s just a simple Google search. But trying to tell someone after the fact that you were aware of a certain source but chose to ignore it is difficult. They can always assume you are lying and “did not do your research.”

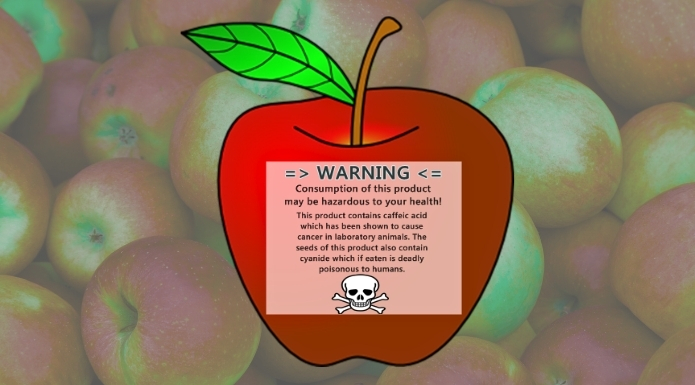

Of course, I have failed to uncover valid research in the past, so any reader is justified in bringing up such research. For example, I failed to uncover newer scientific evidence on the question of whether apples keep potatoes from sprouting, leading me, initially, to an incorrect conclusion. However, not all research is created equal.

The “Research” Fallacy

In the age of the instant answer, we have confused research with searching.

- Searching is typing a query into Google and skimming the first result that agrees with you.

- Research is the exhaustive process of vetting sources, understanding methodology, and looking for evidence that disproves your own bias.

Doing a “quick Google search” and finding a blog post isn’t research; it’s just finding an ally for your assumption.

The Futility of Explaining the Scientific Method

Assuming that the reader does believe that I knew about the experiment, how do I explain the reasons why I chose to not include the sources in my article? I am left pondering the futility, if not the pretentiousness, of explaining the basic scientific method to them. But that is often what this all boils down to: Proper experiments are done by trained scientists, not by food bloggers (sorry, fellow writers).

Let me give you an example. I asserted, in this article about marinades, that a marinade will not do much to tenderize meat. Suppose that a reader finds an article by Mike at Experimental Food Blog (hypothetical blog), contacts me, and helpfully informs me that Mike over at EFB experimented with marinades and found that they do make steaks more tender.

Let’s further assume that I already knew about Mike’s article and that I did not consider his experiment or his conclusions valid. Why? Well, it is a far cry from the type of evidence that led me to update the article I mentioned above.

The Scientific Method Vs. The Food Blogger Method

I chose the tenderizing of meat for a reason, and before I begin, I would like to thank Jeff Potter, author of Cooking for Geeks: Real Science, Great Cooks, and Good Food, for reminding me of this perfect example: The Mythbusters, Adam Savage and Jamie Hyneman, performed an experiment to test whether you could tenderize meat with explosives (Season 6, Episode 10). I am a huge, huge fan of Mythbusters, and I still haven’t quite gotten over the show ending.

Despite how silly such a notion may seem, the Mythbusters knew something that Mike and many other food experimenters don’t know: Setting up such an experiment and analyzing the results is not a simple matter. Mike said his steaks were more tender. Why? Because he cut into one or two and thought to himself, “Now that is a tender steak”?

You see, there is every chance that had Mike given the steak to a different person to eat, they would have come to a different conclusion. He could have had 5 people try his marinated steaks and non-marinated steaks and gotten results all over the place. And what if, as he added more people to the experiment, it became harder to pin down any definite result? This is assuming he had more than one participant, which is doubtful.

What is Tenderness? This is the Operating Question.

This is the first thing the Mythbusters had to do. They had to figure out just what tenderness is and how to measure it. According to Adam Savage, they spent a whole day testing and ended up scrapping it. These tests, which were never shown in the finished episode, ended up being a wash because the Mythbusters found they were using the wrong parameters. If the Mythbusters had a hard time with the tests, what are we to assume about Mike at home in his kitchen, laptop at hand?

It turned out that the USDA (United States Department of Agriculture) already had a way of testing steak tenderness that resulted in accurate and repeatable data: a machine that measures how many pounds of force it takes to punch a hole through the meat. Furthermore, the Agricultural Marketing Service of the USDA, in cooperation with ASTM International, has developed a USDA Tenderness Program, which is meant to help consumers find specific cuts of beef that meet standards allowing the claims “USDA Tender” or “USDA Very Tender.”

Scientific Method To Measure Steak Tenderness

To find whether a cut meets minimum thresholds for tender or very tender claims, Warner-Bratzler shear force measurements are used. This test measures the amount of force needed to shear through a sample of meat. The measurements can then be compared to national standards. The video below shows such a test being performed.

Munching on a steak and writing down your opinion of its tenderness does not produce accurate, objective data. It produces subjective, and perhaps nonrepeatable, impressions. You can often surmise when such experiments are not valid. You see, when you experiment, you often already have an idea about what your testing will show. In the case of marinades, our own experience and the experience of many professional cooks should tell us that if marinades do enhance tenderness, the difference is small.

Always Question DRAMATIC Results

If Mike’s results are dramatic and he writes of “the most tender steak he’s ever eaten,” we are right to question his methods, and even his honesty. Often, dramatic results that go way beyond what most professionals would expect are seen as more valid and as “proving everyone wrong.”

Experimental results are rarely so dramatic, however. It is usually difficult to tease out the small differences between a test and a control.

The Sunk-Cost Fallacy of Home Science

This is why we see so many “Best Steak I Ever Had” claims in home dry-aging or 48-hour marinades. If a cook invests 40 days of fridge space or three hours of active labor into a single meal, they are no longer an objective judge. They have “Skin in the Game.” To admit the steak is just “okay” would be to admit they wasted their time. Their brain provides the flavor that the science didn’t.

The Cola Wars

Do you like Coca-Cola or Pepsi? Pepsi used to run television commercials showing people engaging in blind taste tests. They claimed more people chose Pepsi than Coke. This was never generally true, but even if it were, would these experiments prove that “Pepsi is better than Coke?” Of course not. It is a matter of preference.

If you like Coca-Cola better than Pepsi, then it is better to you, and that is all there is to it. If you think steak is any different, you’ve been watching too many cooking shows on television.

The better steak is the steak you like best. Even if it is not considered “correct” by certain standards. As well, you might enjoy a Coke sometimes but at other times want a Pepsi. I’m one of those odd ducks. Sometimes, I just want a Pepsi. The same could be true of steak, which may be even harder to pin down than cola preferences.

What Defines a Good Steak?

Yes, we can assume that certain standards define a good steak. And we can assert that more people will enjoy a steak that meets these standards. But does this prove it’s better? There is, after all, no right or wrong answer to the question of which steak is better.

So, all tasting can do is indicate preferences, and only then if the same tasting experiments are performed with a sufficiently large number of participants. The results from one or two tasters are no result at all. This means most food experiments you see from YouTube creators tell you nothing beyond the taster’s own preference.

🥩 What makes a good steak?

The question is what makes a good steak to YOU? While it pays to keep your mind open about new ways, food preferences are subjective. There is no “proper” way to cook a steak; there are only preferred ways. I challenge the “grumpy chef” dogma about well-done steaks and debunk the idea that they are always dry and rubbery.

Read More: Is Well-Done Steak Bad?

The Ramsay Trap: Is Optimization Worth Your Time?

We see this frequently with celebrity chefs like Gordon Ramsay, who might spend 30 minutes on a single pot of scrambled eggs, cycling it on and off the heat to achieve a specific, tiny curd size. He has “optimized” the dish to his personal preference.

While this is a valid culinary choice, the “science” behind it is often used to make home cooks feel that anything less is a failure. In reality, for a home cook on a Tuesday morning, the 2-minute egg is a net win, and the 30-minute egg is a chore with diminishing returns. This isn’t science—it’s a performance. I can assure you my Waffle House customers would not have tolerated a slow 30-minute scrambled eggs optimization from me. When they hollered out, “Eric, can you scramble me two more eggs?” they wanted them now, not on Ramsay time.

Sensory Evaluation and Objective Evaluation

Food scientists grapple with this question. Both sensory evaluation and objective evaluation of food quality are important to food science. Sensory evaluation is not objective. Therefore, it requires many, many participants. Having people sit down to compare two different steaks and rate their tenderness would fall under sensory evaluation.

The number of people required to produce meaningful results makes such experiments time-consuming and very expensive. And even then, the results would simply show that one or the other would tend to be more pleasing to consumers or diners.
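To see why a handful of tasters settles nothing, here is a minimal Python sketch of an exact binomial (sign) test for a simple two-sample preference tasting. It assumes each taster independently picks either steak A or steak B, and that with no real preference each pick is a coin flip. The function name `preference_p_value` and the panel counts are hypothetical, chosen only for illustration; real sensory panels use more elaborate designs.

```python
from math import comb


def preference_p_value(prefer_a: int, tasters: int) -> float:
    """Exact two-sided binomial p-value against a 50/50 null (no real preference)."""
    if not 0 <= prefer_a <= tasters:
        raise ValueError("prefer_a must be between 0 and tasters")
    # Fold the count onto the upper tail; valid because the 50/50 null is symmetric.
    k = max(prefer_a, tasters - prefer_a)
    upper_tail = sum(comb(tasters, i) for i in range(k, tasters + 1)) / 2**tasters
    return min(1.0, 2 * upper_tail)


# Hypothetical panels: (tasters who preferred steak A, panel size)
for prefer_a, tasters in [(2, 2), (4, 5), (60, 100), (600, 1000)]:
    p = preference_p_value(prefer_a, tasters)
    print(f"{prefer_a}/{tasters} preferred A: p = {p:.4f}")
```

With these made-up counts, a unanimous two-person panel gives a p-value of 0.5 and four out of five gives 0.375, so neither can be told apart from coin flipping. Even 60 out of 100 comes out around 0.06. Only the largest panel gives a clear signal, and even then it only says which steak more people prefer.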

A Lesson in Variables: The 5-Second Rule

The “5-Second Rule” is the ultimate example of a cooking myth that sounds scientific but ignores the most important variables. While people focus on the time the food spent on the floor, real food science shows that surface type and moisture are the true culprits.

Read more: Why the 5-Second Rule is a lesson in cross-contamination variables.

The Problem With Repeatable Results

And then, if you performed the same testing again, lo and behold, you might end up with different results. This is because sensory evaluation, relying on inaccurate and changeable human perceptions, is highly variable and thus difficult to repeat.
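The repeatability problem can be sketched with a little arithmetic. Suppose, purely as an assumption, that 55% of all diners really would prefer steak A. The hypothetical function `majority_probability` below computes how often a panel of a given (odd) size would have a majority siding with A if the tasting were repeated with fresh tasters each time. It treats tasters as independent, which is a simplification.

```python
from math import comb


def majority_probability(true_share: float, tasters: int) -> float:
    """Chance that a strict majority of an odd-sized panel prefers steak A."""
    if tasters % 2 == 0:
        raise ValueError("use an odd panel size so ties cannot occur")
    need = tasters // 2 + 1  # smallest count that forms a majority
    return sum(
        comb(tasters, k) * true_share**k * (1 - true_share) ** (tasters - k)
        for k in range(need, tasters + 1)
    )


TRUE_SHARE = 0.55  # hypothetical: 55% of all diners really do prefer steak A
for tasters in (1, 5, 25, 101, 501):
    agree = majority_probability(TRUE_SHARE, tasters)
    print(f"{tasters:>4} tasters: panel picks A in {agree:.0%} of repeats")
```

Under that assumed 55% split, a five-person panel sides with A only about 59% of the time, so roughly four repeats in ten would “prove” the opposite. The panel has to grow into the hundreds before repeats reliably agree.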

The machine that the Mythbusters built to emulate the USDA machine for testing tenderness, however, would fall under the objective evaluation umbrella. They spent around $50 to make their machine and could have tested many samples in one day with dependable and repeatable results.

However, now we encounter a problem. You may have been asking yourself one very pertinent question: Just because a machine says a steak is more tender, does this mean it will be more enjoyable? The answer, of course, is no.

🍳 The Over-Simplification Trap: Egg Yolk Color

Many people assume that because a food’s color is a visual trait, it cannot possibly influence taste. While it’s “scientifically” true that the pigment itself is flavorless, this ignores the deeper variables. The specific diet required to produce a deep orange yolk often results in a different fatty acid profile, which does change the flavor.

Read more: Why yolk color is a perfect example of an “Open Question” in food science.

A Machine Cannot Measure Enjoyment

Objective evaluation of food only measures ONE thing at a time. But, if you were a company trying to enhance the tenderness of your packaged meat product, you would need to use objective evaluations to accurately narrow down your choices and then rely on human participants to tell you which methods produced the more acceptable product.

So, in reality, whether a steak is objectively tender is not the same question as whether it will be more acceptable to a majority of people who eat it. And, since it is difficult to even ensure uniform cooking techniques and small variables can produce recognizable changes in the finished product, it would be difficult for a home cook to approach the kind of scientific rigor of a food company employing trained food scientists.

The Mythbusters did indeed “confirm” that explosives could be used to tenderize a steak. They also found that steak could be tenderized in a clothes dryer.

And this, I hope, answers the question for those readers who doubt I have considered all the sources when I “bust a myth” or attempt to evaluate it. I also hope it makes you a bit more skeptical of those who claim to bust cooking myths by testing them. You’ll notice that I do not, and I think you can see why now.

Outfits like America’s Test Kitchen and some other oft-mentioned sources can be entertaining and very informative, but they are not valid scientific sources. They often do not have any means of objective evaluation and have far too few participants for any reliable standard of sensory evaluation.

The Subjectivity of ‘Success’

They also fail to operationalize their terms. Many readers questioned my advice that it is not practical to dry age your own steaks at home. They told me they had done it themselves with great success—but what does ‘success’ mean in this context?

In a lab, success is measured by a 15% concentration of specific flavor compounds or a quantifiable decrease in shear force. In a home kitchen, ‘success’ usually just means the cook didn’t get sick and the steak tasted pretty good. Without a control sample to taste blindly alongside the dry-aged version, the cook is simply confirming their own hard work.

In the end, what we are left with is the opinion of one or a few cooks who tried two or three different ways of cooking something. If you try the experiment yourself, your results may vary! While food bloggers are concerned with busting cooking myths, food scientists are concerned with improving food quality, shelf-life, and consumer satisfaction, among other things. Often, however, truly rigorous experimentation has already been performed, and a dedicated search can turn up published results from scientific journals.

The Lab vs. The Legacy: Science and Collective Experience

Many other food and cooking myths are not put to rest based on cooking experiments but based on explanations of the underlying science. The answer may also be found in the experience of professional chefs and home cooks, which has been honed for generations.

If a certain cooking fact or ingredient reaction seems to violate known properties, we can often, through relying on scientific explanations that themselves rely on other sources of evidence, bust a myth without having to pick up a knife or turn on a stove.

This is where many modern ‘science-based’ influencers lose the plot. They start with the premise that because a technique hasn’t been tested in a modern kitchen lab, it must be a ‘myth’ waiting to be debunked. But what is their initial hypothesis?

A hypothesis is supposed to be a testable prediction based on existing evidence. But in the world of ‘culinary theater,’ the hypothesis is usually just an unvetted assumption. They assume the ‘old way’ is wrong, assume their ‘new way’ is better, and then perform a demonstration to prove themselves right. That is the literal definition of confirmation bias, the very thing science is designed to prevent.

A perfect example of this occurs in The Food Lab, where the author describes questioning his first restaurant chef about their French fry method. The restaurant used the traditional two-stage fry (par-cooking at a lower temp, then crisping at a high temp). The author asked: Why don’t we just boil them in water first and then fry them?

He characterizes the chef’s dismissal of the question as an ‘anti-curiosity’ stance that sparked his interest in food science. But as any former short-order cook or kitchen manager can tell you, the chef wasn’t being anti-science; he was being practical.

Starting fries in boiling water in a professional kitchen is a logistical nightmare:

- Equipment & Space: You’d need a dedicated burner and a massive stockpot of boiling water, taking up precious ‘real estate’ on the line.

- Structural Integrity: Par-boiled potatoes are fragile; transferring them from water to a fryer basket would turn half your batch into mush.

- The Physics of Danger: You would be introducing dripping wet potatoes into a vat of 375°F oil. That isn’t ‘science’—it’s a recipe for a grease fire and a trip to the ER.

The chef didn’t need a lab to know the idea was silly. He had the ‘Big Data’ of professional experience. Ignoring that experience doesn’t make you a better scientist; it just makes you a more dangerous cook.

Kenji’s French fry story illustrates a fundamental lack of scientific cognition. In a real lab, an assumption is something you identify so you can control for it. You don’t just ‘assume’ the fries will be better; you acknowledge that by boiling them, you are introducing new variables like moisture content and starch gelatinization that could ruin the final product.

When an influencer treats an assumption as a ‘bold new hypothesis’ without accounting for the logistical and physical variables it creates, they aren’t doing an experiment, they are just making a guess and calling it science.

Usually, this kind of guess is a rejection of collective experience. If generations of French grandmothers or Waffle House short-order cooks have done something a certain way for 100 years, that is a form of data. It’s a massive, multi-generational sample size. To ignore that in favor of a one-day ‘experiment’ in your own kitchen isn’t being a scientist; it’s being a contrarian.

The “I Worked in the Industry” Shield

Another common hurdle is the vague appeal to authority: “I’ve worked in the industry.” People often use this as a conversational shield to shut down a debate without providing a single specific fact.

True experience is granular. Someone who actually understands grocery store logistics will talk about specific supply chain protocols or SKU management. A person who says, “I worked in the grocery industry” might have just stocked shelves for a summer—a job that provides zero insight into high-level logistics.

We see this in the culinary world, too. A cook who worked at one restaurant for six months will often generalize their experience to “how all restaurants work.” Even influencers like Kenji often rely on past “experience” as a substitute for scientific cognition. They use a professional veneer to hide a lack of rigorous methodology, assuming that because they were “in the industry,” their subjective observations are now immutable laws of science.

Does It Need to be Proven? The Bold Prediction Problem

But even when these ‘industry experts’ try to apply a veneer of science, they fail to hit the most important benchmark of actual research: The Bold Prediction. This problem is apparent in the scientific methods used by López-Alt and other sources. In real science, a researcher takes a risk by making a specific, falsifiable prediction: If I am right, X will happen. However, if Y happens instead, my hypothesis is wrong. This willingness to be proven incorrect is the very heart of the scientific method.

In culinary theater, however, no such risk is taken. The influencer never makes a prediction that could actually fail. Instead, they perform a ‘demonstration.’ The outcome is either guaranteed by basic physics or is so subjective that they can simply declare victory regardless of the result. If you aren’t risking a ‘fail’ state, you aren’t doing an experiment, you’re just filming a rehearsal with a predetermined conclusion. This lack of risk is why so many cooking myths are allowed to persist under the guise of ‘scientific’ testing.

We can never claim to have proven anything until a proper experiment is done. However, when the underlying natural laws are fairly clear, as they often are, it may not be worth getting into a tizzy over, unless you simply enjoy experimenting with food. Just realize that your results will probably be subjective and your experiment unscientific.

Science Experiments Versus Demonstrations

The final thing to recognize is that most ‘food experiments’ are not experiments at all. As I previously stated, they are science demonstrations. I once complained that my son came in second place for his ‘science project’ at school when the winning student simply performed a common demonstration, with expected results, but my son, with my help, performed a true scientific investigation with novel results.

We tested whether ants preferred sugar, bread crumbs, dried bits of chicken, or other sources of food. To our surprise, ants prefer chicken or other meat! And we were sure they would go for the sugar. When I was homeschooling my son, we did a food science project. We made a pH-testing fluid from purple cabbage. Pretty cool! But this was no experiment; it was simply a demonstration of a known concept.

🔥 The Ultimate Culinary Theater: Flambé

Perhaps no technique is more performative, and less scientific, than flambé. While the belief is that the flame instantly removes the alcohol, the data tells a different story. Rigorous testing shows that simple heating actually removes more ethanol than the fire does. While significant amounts of alcohol can remain after cooking, these amounts are often statistically significant but not practically so. You aren’t going to get a “buzz” from a vodka sauce, but the flambé itself remains a spectacle that provides a visual “wow” while doing almost nothing for the actual chemistry of the dish.

So, while you may come across many ‘food experiments’ you can do at home, remember that these are not truly experiments but simply demonstrations of scientific phenomena with expected results. This is not the kind of food science I have discussed in this article.

Science is a tool for discovery, not a costume for content. Next time you see a ‘revolutionary’ cooking hack, ask yourself: Is this an experiment designed to find the truth, or a demonstration designed to find an audience?